The Dawning of the Age of Anthropocene

By altering the earth, have humans ushered in a new epoch?

Jessica Stites

Scientists have been clanging the alarm about human-caused climate change, trying to bring around the 33 percent of Americans who don’t believe the earth is warming and the 18 percent of believers in global warming who think the process is “natural.” But as climate scientists race against time to convince the resisters, another branch of science, geology, is taking the tortoise approach. For more than a decade, geologists have been debating whether to officially declare the existence of a new epoch, the “Anthropocene,” to acknowledge that humans are radically reshaping the earth’s surface. Some geologists see the Anthropocene model as a way to widen the lens on human impact on the earth and cut through both the alarmism and the resistance to spur concrete policy solutions.

Geologists divide the earth’s 4.5 billion-year history into eons, which are subdivided into eras, periods and epochs. Each division is marked by a discernible change in the earth’s strata, such as a new type of fossil representing a major evolutionary shift. On this global scale, humans are relative infants, just 200,000 years old. Civilization has sprung up only during the most recent epoch, the relatively warm interglacial Holocene, spanning the past 11,700 years.

But the Holocene’s days may be numbered. In 2000, the late biologist Eugene F. Stoermer and Nobel Prize-winning atmospheric chemist Paul Crutzen made a radical proposal in the International Geosphere-Biosphere Programme newsletter: that the Industrial Revolution’s explosion of carbon emissions had ushered in a new epoch, the Anthropocene, marked by human impact. In 2009, the International Commission on Stratigraphy’s Subcommission on Quaternary Stratigraphy assembled an Anthropocene Working Group, which in 2016 will issue a recommendation on whether to formally adopt the term.

For stratigraphers, this is “breakneck speed,” says University of Leicester paleobiologist Jan Zalasiewicz, the head of the working group. The geological time scale is as fundamental to the discipline as the periodic table is to chemistry. The last official change, when the Ediacaran Period supplanted the Vendian in 2004, came after 20 years of debate and shook up a scale that had been static for 120 years.

Whether or not the term is formalized, Zalasiewicz believes in the Anthropocene’s potential to alter perception, for geologists and non-geologists alike. “ ‘Anthropocene’ places our environmental situation in a different perspective,” he says. “You look at it sideways, as it were.”

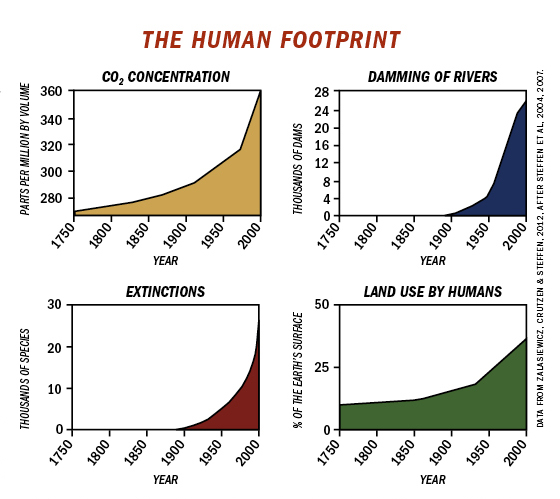

Certainly, you look at it from a broader perspective. Zooming out to an aeonic scale is a sober way to assess the significance of climate change. Overall, human emissions have driven up global temperatures about 0.85 degrees Celsius since the 19th century, according to the UN’s Intergovernmental Panel on Climate Change. Deniers are fond of noting that Earth’s current temperatures are not the highest they’ve been, and that the earth cyclically warms and cools. That’s true. There have been several “hyperthermal” events when carbon dioxide has been released by the ton from the ground or the ocean, and the earth’s temperature has spiked. However, as Zalasiewicz and colleague Mark Williams note in a recent paper in the Italian journal Rendiconti Lincei, carbon is being released today more than 10 times faster than during any of these events. And when the other hyperthermal events began, the earth was already relatively warm, without today’s ice-capped poles that threaten to melt and pump up sea levels. Already, warming has eroded Arctic sea ice and altered the territory of climate-sensitive species — effects potentially stark enough by themselves to declare an Anthropocene.

But even if we set aside climate change, there are still plenty of arguments for an Anthropocene, says Zalasiewicz. Take mineral traces: He calculates that humans have purified a half-billion tons of aluminum, enough to cover the United States in tinfoil. We’ve also made never-before-seen compounds, like the boron carbide used in bulletproof vests and the tungsten carbide that forms the balls in ballpoint pens. And then there are plastics: more than 250 million tons are now produced annually. Zalasiewicz and his colleagues term these various human-made objects “technofossils.”

Technofossils aren’t the only things that humans scatter; we’ve also spread germs, seaweed, strawberries, sparrows, rats, jicama, cholera … the list goes on. You might see us as bees pollinating the earth or, less flatteringly, dung beetles scuttling our shit around. Today, according to a 1999 Cornell study, 98 percent of U.S. food, both animal- and plant-derived, is non-native.

Then there’s the population explosion. Humans now make up nearly one-fourth of total land vertebrate biomass, and most of the rest is our food animals, with wild animals — the lions, tigers and bears — making up a mere 3 percent. Those proliferating people suck up resources: According to calculations by Stanford geology PhD student Miles Traer, humans use the equivalent of 13 barrels of oil per person every year, and the United States consumes 345 billion gallons of fresh water a day, enough to drain all of Lake Michigan every 10 years.
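The Lake Michigan comparison can be sanity-checked with a few lines of arithmetic. The sketch below is only a rough check, not Traer’s own calculation: the 345 billion gallons a day comes from the figure above, while the lake volume of roughly 4,900 cubic kilometers is an outside assumption, and the function name `years_to_drain` is purely illustrative.

```python
# Rough check of the "Lake Michigan every 10 years" comparison.
# ASSUMPTION (not from the article): Lake Michigan holds about 4,900 cubic km of water.

LITERS_PER_US_GALLON = 3.785411784  # exact, by definition of the US gallon
LITERS_PER_CUBIC_KM = 1e12          # 1 km^3 = 1e9 m^3 = 1e12 liters
DAYS_PER_YEAR = 365.25


def years_to_drain(lake_volume_km3: float, gallons_per_day: float) -> float:
    """Return how many years a given daily water use takes to equal a lake's volume."""
    lake_gallons = lake_volume_km3 * LITERS_PER_CUBIC_KM / LITERS_PER_US_GALLON
    gallons_per_year = gallons_per_day * DAYS_PER_YEAR
    return lake_gallons / gallons_per_year


if __name__ == "__main__":
    # 345 billion gallons a day is the U.S. consumption figure quoted in the text.
    years = years_to_drain(lake_volume_km3=4_900, gallons_per_day=345e9)
    print(f"Years to use one Lake Michigan's worth of water: {years:.1f}")
```

Under that assumed volume, the result lands at roughly a decade, which is consistent with the comparison as stated. A different volume estimate would shift the answer proportionally.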

In the face of all this, few geologists deny that stratigraphers a billion years from now (either human or alien, depending on your level of optimism) will see our current period as a major shift in the geological record. So the main question facing the Anthropocene Working Group is when the Anthropocene began, or in geological parlance, where to plant “the golden spike.” Four possibilities are under consideration.

One camp favors the 1800s, when megacities began to emerge and form unique strata of compressed rubble and trash heaps. London was the first, with a population of 3 million in 1850. With megacities came industrialization and a sudden escalation in CO2 emissions that began to push up global temperatures.

A second group, including many archeologists, argues for an earlier date. University of Virginia paleoclimatology professor William F. Ruddiman calculates that the first Agricultural Revolution, which began some 12,000 years ago, caused greater climate effects than have yet been seen from the Industrial Revolution. Due to the widespread preindustrial use of fire to clear forests, Ruddiman believes that emissions of greenhouse gases, coupled with the loss of forests’ cooling effect, caused the earth’s temperature to be 1.3 to 1.4 degrees Celsius higher than if humans had never existed, warding off an overdue Ice Age.

However, where to plant the golden spike in this model isn’t entirely clear, since the changes happened over thousands of years. One option would be the mass extinction of large animals in the Americas some 12,500 years ago, believed to be caused by humans. In that case, the Anthropocene would supplant the Holocene.

A third school believes the Anthropocene should be dated to the mid-20th century, when the “Great Acceleration” began and population, urbanization, globalization and CO2 emissions took off. The past 60-odd years have seen a doubling of the human population and the release of three-quarters of all human-caused CO2 emissions. Zalasiewicz favors this hypothesis because of the sharpness of the acceleration and the synchronicity of the changes. The automobile, for instance, proliferated worldwide in less than a century, the blink of a geologic eye. This time period contains a dramatic option for the “golden spike”: the first A-bomb tests of the 1940s, which left radionuclidic traces across the earth that are readily detectable in ice core samples pulled from the poles. Astrobiologist David Grinspoon, a proponent of the nuclear-testing golden spike, writes, “The symbolism is so potent — the moment we grasped that terrible Promethean fire that, uncontrolled, could consume the world.”

The fourth and final camp is made up of the “not yets,” who point out that everything is still accelerating (population, technofossil production, CO2 emissions), with no reason to believe things will stabilize and some reason to expect dramatic upheavals. Many geologists predict that the next two centuries will bring a mass extinction event of a magnitude seen only five times before (e.g., the dinosaur die-off). A number of drastic changes triggered by climate change may lie in store, according to an IPCC report released March 31: not only extinctions, but also food and water shortages, irreversible loss of coral reefs and Arctic ice, and “abrupt or drastic changes” like Greenland melting or the Amazon rainforest drying.

It’s here that the conversation veers from the scientific into the panicked, which does not sit well with Mike Osborne, a Stanford PhD student in earth sciences and the creator of the “Generation Anthropocene” podcast. More comfortable with data and description than prediction, Osborne eschews apocalyptic thinking, and he doesn’t like the vitriol and politicization of the climate-change debate.

But of course, the issue is political, because it’s inseparable from human choices. A forthcoming peer-reviewed study funded by NASA’s Goddard Space Flight Center warns of the potential for “irreversible” collapse of civilization in the next few decades and stresses that the key factors are both environmental and social. The study says that the enormous consumption of resources is dangerous specifically because it is paired with “the economic stratification of society into Elites [rich] and Masses (or “Commoners”) [poor]” — noting that these two features were common to the toppling of numerous empires, from Han to Roman, over the last 5,000 years.

The issue is also emotional. One side calls the other “alarmist” and “hysterical,” which may be code for “I can’t handle hearing this.” Even for believers, there seems to be a gap between apprehending the problem and believing in a solution. Pew found that 62 percent of Americans understand that climate change is happening and 44 percent believe it’s human-caused, but when Pew asked Americans in another survey what our government’s top priority should be, climate change ranked 19th of 20 issues, just above global trade.

Osborne says he “hesitate[s] to be overly optimistic or pessimistic,” even though, with his first child just born, the earth’s future weighs on his mind. “The Anthropocene’s great utility for me in terms of imagination,” he says, “is that at its best, it comes with a sense of awe: Holy cow, the world is freaking huge and amazing and beautiful and scary.”

By inviting awe rather than (or along with) terror, the Anthropocene may offer a way to grapple with climate change rather than deny it. “The pace of change seems to be ever accelerating, but so does the response. I am loath to underestimate human ingenuity,” says Osborne. Zalasiewicz and Osborne both think that certain effects of the Anthropocene, like warming and biodiversity loss, warrant environmentalist solutions, such as measures to curb CO2 emissions, and wildlife preserves on both land and sea.

Whatever the working group proposes in 2016 — which must then be affirmed by three higher bodies — Zalasiewicz believes the concept of the Anthropocene is here to stay. “If it wasn’t useful, if it was a catchphrase, it would have quite quickly fallen by the wayside,” he says. “It packages up a whole range of different isolated phenomena — ocean acidification, landscape change, biodiversity loss — and integrates them into a common signal or trend or pattern.”

Perhaps the data-driven, methodical approach of geology is what this debate needs. To Osborne, the Anthropocene is exciting because it forces us to look closely at what’s really happening and challenges our tendency to think in a nature/human divide. In the Anthropocene model, the earth’s workings and fate are intertwined with our own. Indeed, science theorist Bruno Latour goes so far as to say that the Anthropocene could mark “the final rejection of the separation between Nature and Human that has paralyzed science and politics since the dawn of modernism.”

Jessica Stites is Editor-at-large for In These Times.