Imagine: A convicted drunk driver who needs to convince a judge he hasn’t had a drink in years. A father in a custody battle who needs to prove he did not abuse his spouse. A suspected corporate thief who needs to prove his innocence.

These are just some of the people willing to pay $5,000 or more to expose their brains to scientists and show that they are telling the truth.

Knowing for certain when someone is lying is the stuff of dystopian science fiction – and the hope of cops and spies around the world.

And, if some aggressive technology entrepreneurs get their way, the technology will become a reality, coming soon to courts and interrogation rooms near you.

What makes this possible is functional magnetic resonance imaging (fMRI), which has already transformed the world of neuroscience.

Cephos Corporation and No Lie MRI are two American companies at the forefront of using fMRI technology to verify truth in the corporate, government and legal realms.

Cephos, based in Massachusetts, argues that its techniques fit all the legal standards and tests to be admissible in court.

No Lie, based in California, has scores of clients interested in using its process as evidence of their truthfulness in court hearings.

Steven J. Laken, founder of Cephos – whose corporate motto is “Our Business Is the Truth,” and whose mission statement reads, “We believe Truth is among the most valuable of commodities” – claims a 93 percent success rate in distinguishing truth-telling from lying. (Humans can typically tell truth from lies about 50 percent of the time, and traditional lie detector machines average around 85 percent.)

Both companies are confident that within months, judges will allow fMRI results to be admitted in trials. Despite the corporate confidence that Cephos and No Lie exude, legal scholars, neuroscientists and ethicists are much less optimistic.

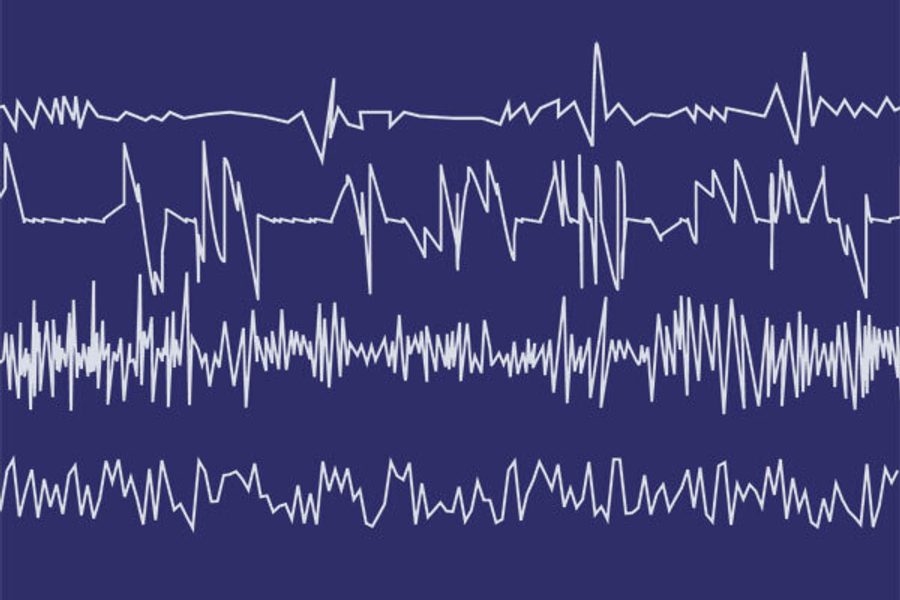

The technology behind the fMRI is relatively simple. Slide a human being into a tube that is essentially a large magnet. Measure blood flow in the brain and produce an image that is filled with bright and dark spots. The fMRI reads your brain in real time by measuring the flow and use of oxygen.

The theory behind the fMRI’s ability to detect a lie is based on human physiology. It takes more effort to tell a lie than to tell the truth, so, if you are lying, your brain works harder and more oxygen is used. Put the person in an fMRI machine, ask questions and interpret the relatively bright and dark spots.

Throughout the United States, fMRIs are used in labs and hospitals to study brain injuries, states of meditation, physical centers of mental disorders, happiness, lust, emotional states and a plethora of other states of mind. But the attacks of 9/11 gave a whole new urgency to the idea of using brain scans to detect lies.

Jonathan Moreno, a senior fellow at the Center for American Progress and author of Mind Wars: Brain Research and National Defense, estimates that at least 50 U.S. labs began studying the use of neuroscience and lie detection after 9/11, many of them funded by the Department of Defense.

“There’s enormous pressure coming from the government for this,” says Paul Root Wolpe, a bioethicist at the University of Pennsylvania. “There is reason to believe a lot of money and effort is going into creating these technologies.”

But as quickly as the interest has grown, so, too, have concerns over its implications.

Henry T. Greely, a Stanford University law professor and a leading critic of using fMRI for lie detection, argues that there are three fundamental problems. First, there is no evidence that the technology works. Second, there’s no evidence that lies can be detected. And third, there’s no regulation of the field. In effect, Greely says, “Anyone can promise anything.”

He rejects the claim of high lie-detecting accuracy, largely because the experiments are conducted in controlled settings. Cephos and No Lie base their results on studies with students being dishonest and honest in artificial situations. Greely says such studies tell us nothing about real life.

Ethicists, too, are alarmed. Although the emphasis these days is on the accused proving their innocence, giving credence to the technology could invite misuse or abuse.

The MacArthur Foundation’s Law and Neuroscience Project is one of a series of initiatives across the United States trying to make sense of the burgeoning use of neuroscience in legal matters.

According to Michael S. Gazzaniga, the project’s director, “The risk that science rejected for use in courts – due to the stringent requirements for accuracy – may still be used widely in society for other purposes is always present.”

The traditional lie detector device – which requires hooking the subject up to wires to record pulse, blood pressure and breathing – burst into the world in the 1920s when medical student John Larson and police officer Leonarde Keeler announced they had created a truth machine.

The lie detector test has had a troubled history ever since. Ken Alder, a historian at Northwestern University and author of The Lie Detectors: The History of an American Obsession, describes the concept of using technology to sort out truth and lies as “uniquely American.”

Greely, Wolpe and others detect two major conceptual mistakes in the whole idea of lie detection. First, measuring the actions of the brain does not tell you anything about what the brain is actually thinking. Second, lying should be judged not by machines but by people.

The classic lie detector poses a similar dilemma. What does it actually measure? Is a change in pulse and blood pressure evidence of a lie or simply evidence of nervousness?

Alder’s exhaustive exploration of how a technological obsession can go wrong is rife with examples of what Gazzaniga worries about.

Despite the Supreme Court’s 1998 rejection of lie detector technology on the grounds that it was not reliable, lie detectors are still used in job interviews, security clearances and interrogations.

In April 2008, the Pentagon started issuing hand-held lie detectors to soldiers in Afghanistan. It argued that the device’s inaccuracy didn’t matter so long as it gave soldiers an edge in confronting possible terrorists. This “erring on the side of technology” over reality is what scares many observers about the growth of fMRI technology.

Jonathan Marks, a bioethicist at Penn State University who studies interrogation techniques in the war on terror, says the use of fMRIs could, in fact, increase the use of torture.

“[P]eople [could] begin to say, ‘the fMRI picked him out as a terrorist so let us give him a going over in the interrogation room,’ ” says Marks. “Contrary to the view that fMRI will render torture obsolete, it might become a license for further abuse of detainees because its readings will convince people that they have a terrorist on their hands.”

Nightmare scenarios, like the ones Marks suggests, have the American Civil Liberties Union (ACLU) concerned.

Barry Steinhardt, director of the ACLU’s Technology and Liberty Project, says fMRIs need to be kept in check.

“There are certain things that have such powerful implications for our society – and for humanity at large – that we have a right to know how they are being used so that we can grapple with them as a democratic society,” says Steinhardt. “These brain-scanning technologies are far from ready for forensic uses and, if deployed, will inevitably be misused and misunderstood.”