Most Mechanical Turkers Are Young, College-Educated and Making Less Than $5 an Hour

Moshe Z. Marvit

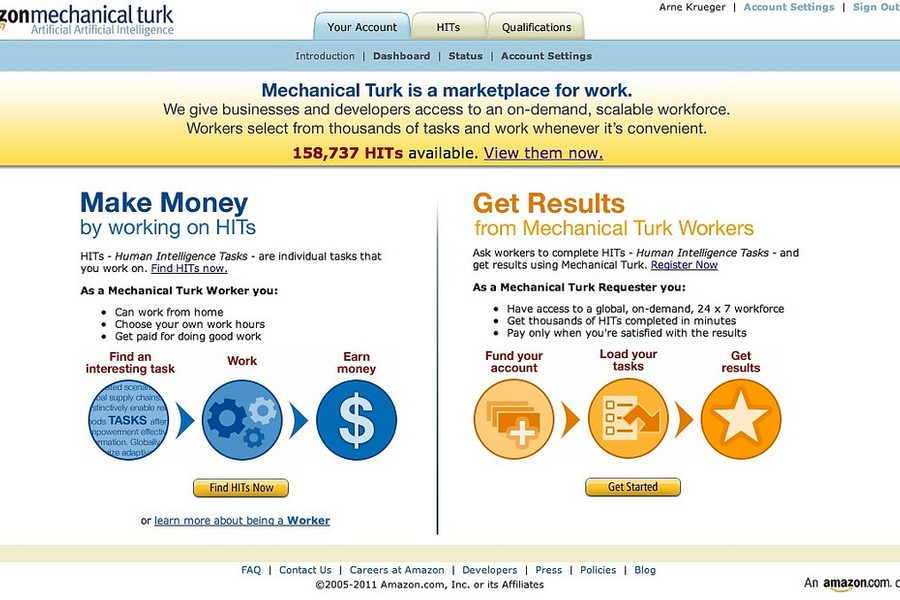

Since 2005, a dispersed group of sub-minimum wage workers has been performing online tasks for pennies through an Amazon-controlled marketplace called Mechanical Turk. These workers tag photos, transcribe audio, take surveys, and do whatever current computer technology cannot. Their work product is scattered across the Internet and throughout academic publications, but the workers themselves have largely remained invisible. Various studies have attempted to take a closer look, but any given study is limited when it must rely on self-reporting from an anonymous workforce.

This week, the Pew Research Center released a major study that fills in some of the gaps in understanding who the “Turkers” are.

The report, titled “Research in the Crowdsourcing Age,” reveals something surprising about these cogs in the digital machine: Most of them are young and college-educated. The majority of them are also making less than $5 an hour performing “short, repetitive microtasks that paid 10 cents or less and could be completed in a few minutes.”

To track down Turkers, researchers surveyed thousands of Mechanical Turk workers at various times each day over a sample period. They offered Turkers varying amounts, between 5 cents and $2, to ensure that they attracted people willing to accept a wide range of compensation.

Paul Hitlin, the study’s author, tells In These Times that he was surprised by the findings. “Because it is so low paying,” he says, “we hypothesized that the people doing it would have much less education.” The results seem to confirm that in the gig economy, the old rules — of education and experience leading to greater compensation — do not apply.

The study revealed another unexpected facet of crowdworking platforms. While for-profit companies use Mechanical Turk to perform tasks ranging from image recognition to transcription, academics have also found a use for this pool of sub-minimum wage labor. The report found that 36 percent of the unique “requesters” (Mechanical Turk speak for “employers”) came from the academy, compared to 31 percent from business.

Exploitation is academic

In the last several years, it seems the allure of cheap labor has led to a growing reliance on Mechanical Turk for research. In 2015, more than 800 peer-reviewed studies across a wide range of disciplines were published using data obtained from Turkers, according to the study. Of the jobs posted by academics on Mechanical Turk, 89 percent were related to surveys used for research studies.

The use of Mechanical Turk by universities has become so common that many of them provide guidance for researchers using the site. Still, paying subjects pennies to complete online surveys has raised ethical concerns. Most of these concerns, however, such as poor work product, professional participants and lack of worker protections, are byproducts of an academic system that relies on cheap labor. The focus so far has not been on pay. It’s been on accuracy and privacy.

In 2013, a group of prominent computer science researchers published a paper with the unusually simple title, “Mechanical Turk is Not Anonymous.” Amazon promotes its platform as offering anonymity to Turkers, and researchers often must offer such anonymity to their subjects, so this built-in feature was ideal for many in the academy. However, the computer scientists describe how their team accidentally discovered that “the same 14-character alphanumeric string to uniquely identify a worker for AMT is also used to uniquely identify Amazon customers across all Amazon properties.”

This means that if one were to do an Internet search of a Turker’s 14-character identifier, one would find her Amazon profile, including wish lists, customer reviews, and often real names and photos. Though many universities and researchers had already become regular users of Mechanical Turk for their research by this point, none was aware of this major vulnerability. The computer scientists chronicled the reaction in the paper, writing, “the initial reaction was one of audible gasps and disbelief, followed by stunned silence. Of all the 30-some highly educated researchers in the room, many of whom had used AMT regularly for years, no one had known.”

Amazon also collects Turkers’ IP addresses and has access to their survey responses. Because of this, the University of California, Berkeley’s Committee for Protection of Human Subjects suggests that researchers use Mechanical Turk only as a recruitment tool, providing a link to another secure site for the actual research.

Furthermore, even though Mechanical Turk has become the primary source for online subjects, many have questioned the accuracy of data collected from Turkers for academic research, because many Turkers serve as subjects across a wide variety of related studies. Researchers are therefore not drawing random subjects from a heterogeneous pool of workers, but rather professional participants who may answer based on their previous experiences as research subjects.

Despite these problems, the appeal of cheap labor to universities appears too great to resist. There’s little to suggest the economics of this calculus will change anytime soon.

Moshe Z. Marvit is an attorney and fellow with The Century Foundation and the co-author (with Richard Kahlenberg) of the book Why Labor Organizing Should be a Civil Right.