The Dangers of a Data-Driven World

As we increasingly use algorithms that base decisions on past successes, innovation is threatened.

David Sirota

If the recent political era has taught us anything, it has reiterated the enduring truth of George Santayana’s aphorism about memory and repetition. Whether once again watching tax cuts fail to deliver a promised economic boost or witnessing more wars fail to deliver stability, we are reminded that “those who cannot remember the past are condemned to repeat it.”

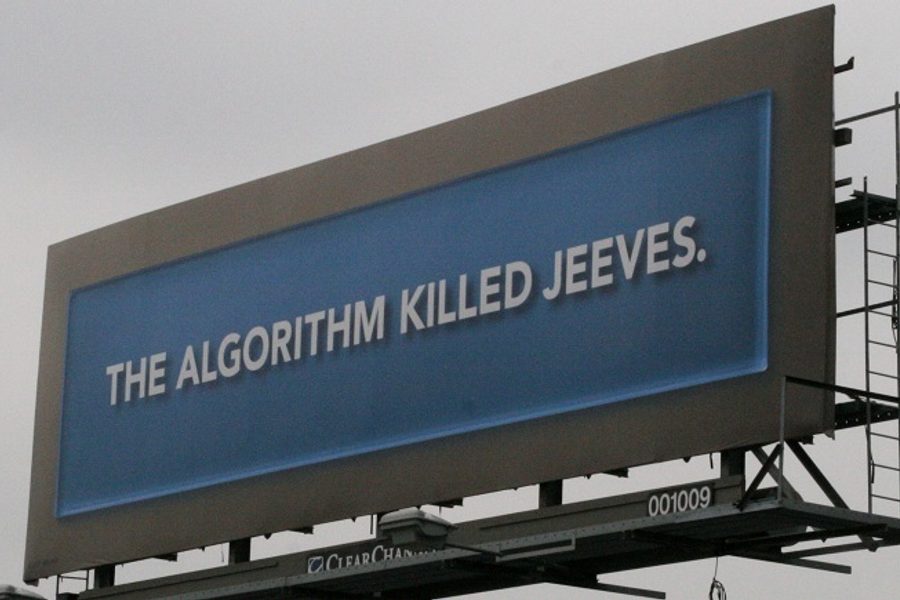

Yet as much as those haunting words are meant as a warning, technology is now coding Santayana’s principle into society’s operating system, as if mimicking history were an admirable objective. Indeed, whether it’s movie studios, record companies, government intelligence agencies or corporate human resources departments, algorithms that use the past to predict (and create) the future are making more and more decisions.

For those employed in creative endeavors, it’s comforting to believe that technology’s use in the information economy begins and ends with the kind of straightforward processes (data entry, dictation, etc.) that require little cognitive analysis and even less artistic thinking. Yet, as Christopher Steiner shows in his mind-blowing new book Automate This, algorithms taking into account past commercial successes are being deployed by the film and music industries to choose which movie and album proposals will be produced. What’s more, an increasing number of the algorithms’ selections have proven profitable.

Steiner also documents the CIA’s seeming preparation for a real-life version of the WOPR from the 1980s flick WarGames. Through grants to New York University and the Hoover Institution, the agency is trying to algorithmically quantify the history of past political and military decisions for the purpose of predicting, and perhaps eventually shaping, future events.

Then there is the realm of employment decisions. In the past, the job interview reigned supreme precisely because it was the arena where an employer could personally assess the key skills that cannot be documented by a CV. Now, though, the Wall Street Journal reports, “For more and more companies, the hiring boss is an algorithm.” Drawing on performance data from past employees, these algorithms rank prospective employees by quantifiable data alone, wholly ignoring the concept of intangibles.

The upsides of this brave new world are obvious: institutions can replace human decision makers with machines, saving money and, theoretically, getting the same or even better results once the algorithm is properly tweaked.

That said, there are big downsides, embodied in the gap between the theoretical and the actual.

Sure, pop culture industries may be able to use algorithms to produce more reliably lucrative films and music. But with those algorithms based on past successes, aren’t they effectively making it less likely the industry will invest in a new wave of genre-busting and paradigm-shifting talents?

Certainly, CIA algorithms may be able to make predictions based on past events. But with the accelerating pace of global change, isn’t there a big risk that such predictions will miss never-before-seen factors that could change the whole geopolitical game?

No doubt, employers’ algorithms built on data about past workforces may help them replicate the same workforce they’ve had for years. But won’t that result in passing over valuable out-of-the-box thinkers whose talents don’t fit within an equation?

The answer to all these questions is a resounding “yes.” That’s because, as much as technology triumphalists and data utopians want us to believe otherwise, we live in a world of both the quantifiable and the incalculable. And no matter what you call the latter, that which cannot be measured remains a factor in all human endeavors.

Pretending it doesn’t runs the risk of fulfilling Santayana’s omen and, in the process, manufacturing an ever more homogeneous, destructive and unfair world.

David Sirota is an award-winning investigative journalist and an In These Times senior editor. He served as speechwriter for Bernie Sanders’ 2020 campaign. Follow him on Twitter @davidsirota.